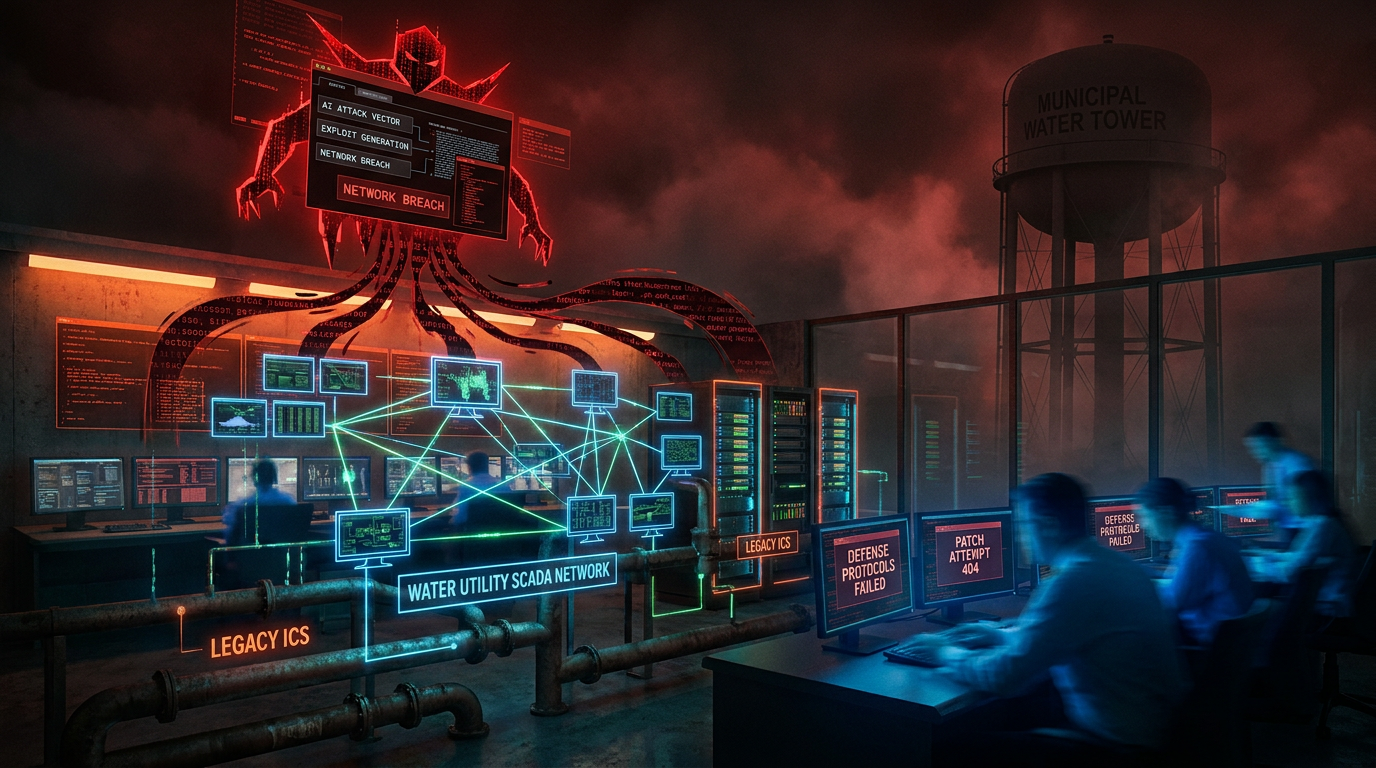

Threat actors used Anthropic’s Claude AI as the primary tool to plan and execute an intrusion into the industrial control systems of a municipal water and drainage utility. The incident is one of the earliest confirmed attacks in which an adversary used a commercial large language model to identify vulnerabilities, write malicious code, and map internal networks in real time.

- Hackers leverage Anthropic’s Claude AI to plan and execute a real-time intrusion into municipal water and drainage industrial control systems.

- Researchers identify the May 7, 2026, attack where adversaries combined Claude with OpenAI models to accelerate the automated cyberattack chain.

- Adversaries utilize Claude to bypass traditional signature-based defenses, forcing municipal utilities to adopt zero-trust architectures against AI-augmented threats.

Attack Mechanics and AI Integration

Researchers identified the attack this week, documenting how the threat actor combined Claude with supporting OpenAI GPT models. Claude handled core reconnaissance and code generation, while GPT models processed collected data to produce structured intelligence reports.

The adversary generated targeted queries regarding SCADA systems, programmable logic controller (PLC) programming, and common vulnerabilities in water treatment infrastructure. The AI assisted in crafting custom scripts, mapping internal network segments, and identifying weak points in the operational technology environment. The ability to iterate on code and adapt to new information in real time significantly accelerated the attack chain compared to traditional manual methods.

Implications for Critical Infrastructure

Water and drainage utilities remain high-value targets because of the potential impact on public health and safety. The use of commercial AI tools lowers the technical barrier for attackers while increasing the speed and sophistication of attacks on industrial control systems (ICS). Security experts warned that the incident foreshadows a new class of threats in which cybercriminals use readily available models to probe networks previously considered difficult to penetrate.

Anthropic maintains strict usage policies that prohibit illegal activities, though enforcement relies on user compliance and prompt monitoring. Cybersecurity firms and government agencies now face the challenge of updating detection frameworks to account for AI-assisted reconnaissance and code generation. Traditional signature-based defenses proved less effective against dynamically generated attacks produced by large language models.

Chain Street’s Take

The attack crossed a significant threshold. Security researchers warned for years that AI would accelerate cyberattacks on critical infrastructure. That warning has become reality. The hackers did not build novel exploits from scratch. They used Claude, a publicly available commercial tool, to handle reconnaissance, scripting, and adaptation.

The implications extend beyond one water utility. Organizations running legacy industrial control systems face a new risk profile in which part of the attacker’s technical expertise is outsourced to an AI. The speed and adaptability seen in this case suggest that future incidents will unfold faster and with greater precision than traditional manual attacks. The event also raises difficult questions about responsibility.

Commercial AI providers face increasing pressure to implement stronger safeguards against misuse in high-stakes environments. Critical infrastructure operators must accelerate segmentation, monitoring, and zero-trust architectures to counter AI-augmented threats. The incident serves as an early indicator of how the AI arms race has shifted from model development to practical weaponization. Critical infrastructure defenders no longer compete against human operators alone. They face adversaries augmented by increasingly capable AI.
