Anthropic faces intense backlash after independent session data shows a massive drop in baseline reasoning for the Claude 4.6 model. The AI developer prioritized cost management over performance, pushing a default configuration that generates faster but significantly shallower code edits.

- Anthropic restricted Claude 4.6 reasoning depth by 67% to mitigate rising inference and operational costs for its AI models.

- Independent data from AMD director Stella Laurenzo shows code reads per edit dropped from 6.6 to exactly 2.0.

- The unannounced shift fuels "AI shrinkflation" concerns as developers pay premium rates for performance that requires frequent manual retries.

Quantifying the Cognitive Collapse in Claude 4.6

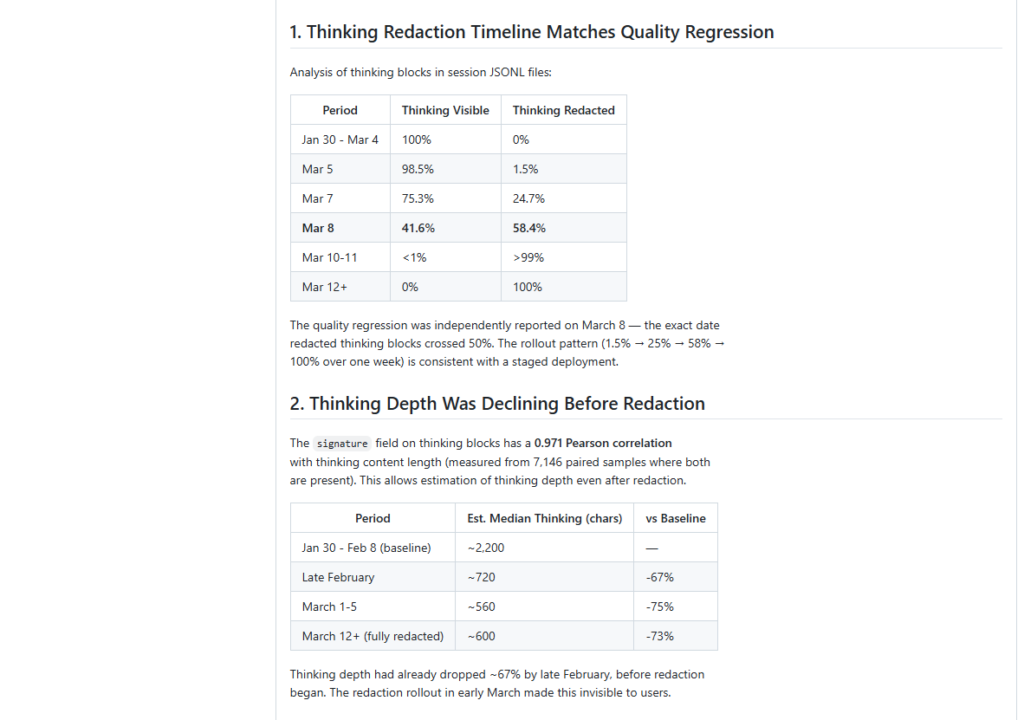

Stella Laurenzo, Senior Director of AI at AMD, published an independent analysis of 6,852 code sessions earlier this week. The study quantified a 67% collapse in thinking depth by late February 2026. Code reads per edit plummeted from a baseline of 6.6 to exactly 2.0. Analysts treated the metric as a primary proxy for how thoroughly the Claude 4.6 system reviewed context before executing structural changes.
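For readers who want to see how a reads-per-edit figure can be derived, the following is a minimal sketch, not Laurenzo's actual tooling. It assumes each session can be reduced to a flat list of event labels; the labels `"read"` and `"edit"` and both function names are hypothetical. It also works out the relative change implied by the 6.6 and 2.0 figures, which comes to roughly 70% and is a separate measure from the 67% thinking-depth number.

```python
from collections import Counter


def reads_per_edit(events):
    """Ratio of read events to edit events in one session.

    `events` is a hypothetical list of labels such as "read" and "edit".
    Returns None when the session contains no edits, since the ratio
    is undefined in that case.
    """
    counts = Counter(events)
    if counts["edit"] == 0:
        return None
    return counts["read"] / counts["edit"]


def relative_drop(before, after):
    """Fractional decline from `before` to `after`."""
    return (before - after) / before


# Illustrative session: four reads spread across two edits.
session = ["read", "read", "edit", "read", "read", "edit"]
print(reads_per_edit(session))

# Relative change implied by the figures reported in the article.
print(round(relative_drop(6.6, 2.0) * 100, 1))
```

A real analysis would have to decide how to classify tool calls and how to aggregate across sessions, choices the article does not describe.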

Laurenzo used a specialized script to flag shallow reasoning. The diagnostic fired 173 times after March 8 and not once before that date. Anthropic had pushed a quiet update on March 3 that shifted the default thinking effort from high to medium. Community complaints about missed context and hallucinations surged right after the change.

Unannounced Optimization and Inflated Costs

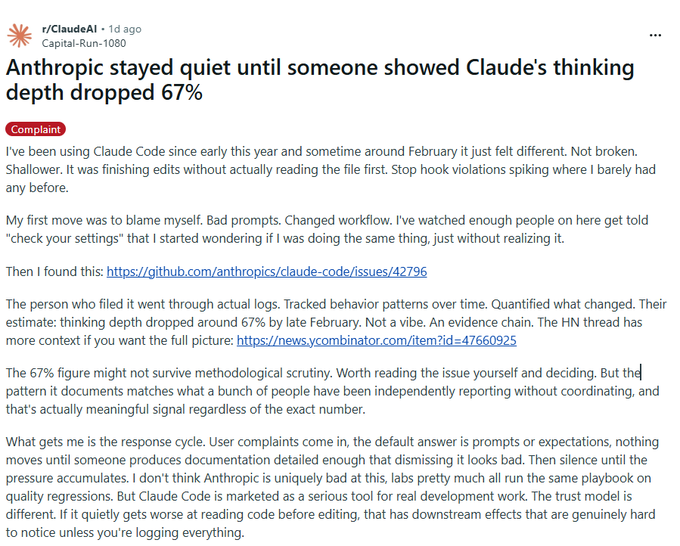

The company did not inform users of the backend adjustment. Executives provided an explanation only after Laurenzo’s findings circulated across developer forums. Claude Code head Boris Cherny defended the downgrade, claiming the medium setting offered a practical compromise for typical workloads. For engineering teams, however, the adjustment inflated operational costs: shallower outputs forced developers to run frequent manual retries to fix broken code.
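The retry argument can be made concrete with a rough sketch. It assumes each attempt succeeds independently with a fixed probability, so the expected number of attempts is one divided by that probability. The function name and every number below are hypothetical and are not drawn from Laurenzo's data or Anthropic's pricing.

```python
def expected_cost_per_success(cost_per_call, success_rate):
    """Expected spend to obtain one acceptable result.

    Assumes independent attempts with a constant success probability,
    which gives an expected attempt count of 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_call / success_rate


# Hypothetical comparison: a deeper, pricier call versus a cheaper,
# shallower call that needs more retries.
deep = expected_cost_per_success(cost_per_call=1.0, success_rate=0.9)
shallow = expected_cost_per_success(cost_per_call=0.7, success_rate=0.5)

# Under these made-up numbers the cheaper call costs more per usable result.
print(shallow > deep)
```

The sketch ignores the developer time spent reviewing and re-prompting, which would widen the gap further.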

Fewer code reads before editing directly translated to poor context understanding. Power users quickly spotted the performance drop during complex tasks. The delayed corporate response fueled industry narratives regarding “AI shrinkflation.” The practice involves providers maintaining premium subscription pricing while throttling compute resources behind the scenes. Engineering teams responded by restricting the model to basic workloads. Many reverted to the older Claude 4.5 Sonnet architecture for core systems.

ChainStreet’s Take

Anthropic built a multibillion-dollar brand on safety and transparency. The decision to quietly restrict backend compute shatters the image of a principled laboratory. Developers pay premium rates for reliable automation. A single API call requiring multiple manual prompts to fix sloppy code breaks the financial math of using generative tools.

Every frontier lab faces intense pressure to manage inference costs. Anthropic caught the heaviest backlash because management executed the downgrade silently. A 67% drop constitutes a massive feature reduction for its users.
