You no longer need expensive API credits to run powerful autonomous agents. Builders now deploy multi-agent teams that research markets, execute trades, or manage data, all without monthly API bills.

- Developers deploy local AI agents using Ollama and CrewAI to eliminate recurring API subscription costs for autonomous workflows.

- Running a Mistral 7B model requires 8GB RAM, reducing monthly operational expenses from $550 to roughly $30 in electricity.

- Local deployments prioritize data privacy but face higher hallucination risks compared to closed-source models like GPT-4 during complex financial tasks.

Local setups, open-source frameworks, and on-chain toolkits make it possible. You keep full control, cut recurring costs to little more than electricity, and can even let agents act directly on-chain.

Local Agents: Zero Infrastructure Cost

Ollama lets you run large language models on your own machine.

Download it from ollama.com, pull a model, and start.

```bash
ollama pull mistral
ollama serve
```

The API runs at localhost:11434. You get free access to models like Llama 3.3, Mistral, Qwen, and GLM-4. No keys. No usage limits.
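To make the local endpoint concrete, here is a minimal sketch of calling Ollama's `/api/generate` route from Python using only the standard library. It assumes `ollama serve` is running on the default port and that the `mistral` model has been pulled. The helper names `build_payload` and `generate` are illustrative, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local endpoint


def build_payload(prompt: str, model: str = "mistral") -> bytes:
    """Encode a non-streaming generate request as JSON bytes."""
    body = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(body).encode("utf-8")


def generate(prompt: str, model: str = "mistral", timeout: float = 120.0) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(prompt, model),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        # With "stream": False the server answers with a single JSON object.
        return json.loads(reply.read())["response"]
```

Calling `generate("Summarize what an AI agent is in one sentence.")` should return the model's text once the server is up. No API key is involved at any point.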

Hardware needs stay modest for most work. A quantized 7B model runs fine on a laptop with 4-8GB of RAM. Move to 30B models and you want 16GB of RAM plus a GPU. 70B models need at least 24GB of VRAM on high-end cards, and often considerably more.
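The sizing figures above follow from simple arithmetic: a model's weights take roughly parameters times bits per weight divided by eight, plus some headroom for context and runtime buffers. The sketch below is a rough estimate only. The 4-bit default and the 1.2 overhead factor are assumptions for illustration, not measured values.

```python
def estimate_model_memory_gb(
    params_billions: float,
    bits_per_weight: int = 4,   # assumed quantization level
    overhead: float = 1.2,      # assumed headroom for context and buffers
) -> float:
    """Rough memory footprint in GB: weights * bits / 8, padded by an overhead factor."""
    weight_gb = params_billions * bits_per_weight / 8  # 1B params at 8 bits is about 1 GB
    return round(weight_gb * overhead, 1)


# Compare the three model sizes discussed in the text.
estimates = {size: estimate_model_memory_gb(size) for size in (7, 30, 70)}
```

Under these assumptions a 70B model lands well above 24GB, which is why large models are often split between GPU memory and system RAM or run at lower precision.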

CrewAI: Easy Multi-Agent Teams

CrewAI connects directly to Ollama and handles role-based agents with almost no hassle.

It ships with over 100 pre-built tools for web search, code execution, and more. Many builders pick it for quick testing because it stays independent of heavier LangChain setups.

A basic setup looks like this:

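```python
from crewai import Agent, Task, Crew
from langchain_community.llms import Ollama

# Connect to the local Ollama server (default: http://localhost:11434).
# Depending on your CrewAI version, you may need CrewAI's own LLM wrapper
# instead of the LangChain class.
llm = Ollama(model="mistral")

# Agents also expect a goal and a backstory; these values are placeholders.
researcher = Agent(
    role="Researcher",
    goal="Research the assigned topic and report the key findings",
    backstory="An analyst who double-checks sources before reporting",
    llm=llm,
)

# define tasks and kick off the crew
crew = Crew(agents=[researcher], tasks=[...])  # task list elided in the original
result = crew.kickoff()
```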

The trade-off shows up fast. Smaller local models like Mistral 7B hallucinate more often than GPT-4. They work great for high-volume tasks but need extra checks on critical decisions.
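One way to add those extra checks is to make the model answer in a strict format, reject anything malformed, and act only when several independent answers agree. The sketch below shows that idea in plain Python. The action set, the JSON shape, and the function names are assumptions for illustration, not part of CrewAI or Ollama.

```python
import json
from typing import Optional

ALLOWED_ACTIONS = {"buy", "sell", "hold"}  # hypothetical action set


def parse_decision(raw: str) -> Optional[dict]:
    """Return the decision only if it is valid JSON with sane fields, else None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    confidence = data.get("confidence")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return {"action": action, "confidence": float(confidence)}


def agreed_decision(raw_answers: list[str], min_votes: int = 2) -> Optional[str]:
    """Count valid answers per action; return one only if it reaches min_votes."""
    votes: dict[str, int] = {}
    for raw in raw_answers:
        decision = parse_decision(raw)
        if decision is not None:
            votes[decision["action"]] = votes.get(decision["action"], 0) + 1
    if not votes:
        return None
    action, count = max(votes.items(), key=lambda item: item[1])
    return action if count >= min_votes else None
```

A `None` result means "do nothing and escalate", which is usually the safer default when a small model is unsure or inconsistent.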

LangGraph: Stateful and Complex Workflows

When your agents need real memory and multi-step reasoning, LangGraph shines. It models a workflow as a graph of steps that share state, it is free and open source, and it supports streaming responses.
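To illustrate what "graph-based and stateful" means, here is a tiny plain-Python sketch of the pattern: nodes update a shared state, and edges decide which node runs next. This is not LangGraph's API. The `run_graph` helper and the two example nodes are hypothetical and only show the shape of such a workflow.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]
Edge = Callable[[State], Optional[str]]


def run_graph(nodes: Dict[str, Node], edges: Dict[str, Edge],
              start: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph from `start`, merging each node's output into the shared state."""
    current: Optional[str] = start
    steps = 0
    while current is not None:
        if steps >= max_steps:
            raise RuntimeError("workflow did not finish within max_steps")
        state = {**state, **nodes[current](state)}
        current = edges[current](state)  # returning None ends the run
        steps += 1
    return state


# Hypothetical two-node workflow: retry a fetch a few times, then report.
def fetch(state: State) -> State:
    return {"attempts": int(state.get("attempts", 0)) + 1}


def report(state: State) -> State:
    return {"summary": f"done after {state['attempts']} attempts"}


nodes = {"fetch": fetch, "report": report}
edges = {
    "fetch": lambda s: "fetch" if s["attempts"] < 3 else "report",  # loop until 3 tries
    "report": lambda s: None,
}
final_state = run_graph(nodes, edges, "fetch", {})
```

The loop in the `fetch` edge is the point: because state persists between steps, the workflow can revisit a node and behave differently the next time.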

Low-Code Option: n8n

If you want to skip heavy coding, n8n offers visual workflows with over 1000 integrations. You self-host it for free. Many non-coders use it to connect agents to email, calendars, databases, and APIs without writing much Python.

LM Studio gives you a clean desktop GUI for browsing and running models if the terminal feels intimidating.

On-Chain Agents: Let Them Act on Blockchain

You can give agents their own wallets so they monitor balances, swap tokens, or provide liquidity without you babysitting every step.

Solana Agent Kit stands out for Solana users. It includes 60+ ready actions and works with any LLM or framework. Your agent can check balances, transfer SOL, stake, or interact with any program on the network. Gas fees stay tiny, often under a cent per transaction.

GOAT Toolkit covers multiple chains in one setup, including Solana and Ethereum. Coinbase AgentKit focuses more on Ethereum, Polygon, and Base with clean wallet integration.

Real Example: A Simple Monitoring Agent

You want an agent that watches a Solana wallet and alerts you if the balance drops below $100.

Stack it with Ollama (Mistral 7B), Solana Agent Kit, LangGraph, and a free public RPC. Total cost stays at zero if you already own the hardware.

A basic script runs in about 50 lines of code. Once started, it keeps going 24/7 on your machine.
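As a rough idea of the core loop, the sketch below skips Solana Agent Kit and LangGraph and talks to a public RPC endpoint directly through the standard `getBalance` JSON-RPC method. The price source (`get_price_usd`) and the alert callback (`alert`) are hypothetical hooks you would supply yourself, and the threshold default mirrors the $100 in the example.

```python
import json
import time
import urllib.request

RPC_URL = "https://api.mainnet-beta.solana.com"  # public mainnet RPC endpoint
LAMPORTS_PER_SOL = 1_000_000_000


def get_balance_request(address: str) -> bytes:
    """Build the JSON-RPC body for a getBalance call."""
    body = {"jsonrpc": "2.0", "id": 1, "method": "getBalance", "params": [address]}
    return json.dumps(body).encode("utf-8")


def fetch_balance_sol(address: str, rpc_url: str = RPC_URL) -> float:
    """Query the RPC node and convert the lamport balance to SOL."""
    request = urllib.request.Request(
        rpc_url,
        data=get_balance_request(address),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=30) as reply:
        lamports = json.loads(reply.read())["result"]["value"]
    return lamports / LAMPORTS_PER_SOL


def below_threshold(balance_sol: float, sol_price_usd: float,
                    threshold_usd: float = 100.0) -> bool:
    """True when the wallet's USD value has dropped under the threshold."""
    return balance_sol * sol_price_usd < threshold_usd


def watch(address: str, get_price_usd, alert, interval_seconds: int = 60) -> None:
    """Poll forever; get_price_usd and alert are caller-supplied (hypothetical) hooks."""
    while True:
        balance = fetch_balance_sol(address)
        if below_threshold(balance, get_price_usd()):
            alert(f"Balance low: {balance:.4f} SOL")
        time.sleep(interval_seconds)
```

Public RPC endpoints are rate limited, so a polling interval of a minute or more is a reasonable starting point. A production version would also need error handling around the network call.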

The Real Limitations

Local setups only feel free if you already own decent hardware. Renting GPUs can quickly cost more than API credits.

Model quality still trails the best closed models. Local versions hallucinate more, especially on complex or fast-moving topics. Many builders run hybrid systems: local agents for routine work, paid APIs only when accuracy matters most.
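A hybrid setup can be as simple as a routing rule in front of your agents. The sketch below is one possible rule, with hypothetical task flags and backend labels. Real systems would pick their own criteria for when accuracy matters most.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTask:
    description: str
    moves_funds: bool = False       # hypothetical flag: task can spend money
    needs_fresh_data: bool = False  # hypothetical flag: fast-moving topic


def choose_backend(task: AgentTask) -> str:
    """Send high-stakes or fast-moving work to the paid API, everything else local."""
    if task.moves_funds or task.needs_fresh_data:
        return "paid_api"
    return "local"


routine = AgentTask("Summarize yesterday's logs")
critical = AgentTask("Rebalance the treasury wallet", moves_funds=True)
routes = [choose_backend(routine), choose_backend(critical)]
```

Keeping the rule explicit like this also makes it easy to log how often the paid path is taken, which is what drives the monthly bill.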

Security becomes critical once agents touch real money. Never hand over full private keys. Use session keys with strict limits, daily spend caps, recipient whitelists, and test everything on testnet first. One slip can drain a wallet.
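The limits described above can also be mirrored in the agent's own code as a last line of defense. The following is a minimal sketch of an application-level guard with a per-transaction cap, a daily cap, and a recipient whitelist. The `SpendPolicy` class is hypothetical, and it does not replace session keys or limits enforced by the wallet or on-chain.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class SpendPolicy:
    daily_cap: float
    per_tx_cap: float
    allowed_recipients: frozenset
    _spent: dict = field(default_factory=dict)  # maps a date to the total approved that day

    def authorize(self, recipient: str, amount: float, today: date) -> bool:
        """Approve a transfer only if it passes every limit; record it when approved."""
        if amount <= 0 or amount > self.per_tx_cap:
            return False
        if recipient not in self.allowed_recipients:
            return False
        spent = self._spent.get(today, 0.0)
        if spent + amount > self.daily_cap:
            return False
        self._spent[today] = spent + amount
        return True
```

The agent would call `authorize` before signing anything and treat `False` as a hard stop. Because the guard runs in the same process as the agent, it limits mistakes but not a compromised machine, which is why on-chain or wallet-level limits still matter.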

Single-machine setups handle hobby projects well. For reliable 24/7 production work, you eventually add orchestration layers that push costs to $50-200 per month.

Cost Comparison (24/7 for One Month)

- Pure local (Ollama + CrewAI): $15-30 in electricity

- Local + Solana actions: $20-50 (electricity + tiny gas)

- Local + Ethereum actions: $65-230 (electricity + higher gas)

- Paid API route (heavy GPT-4 usage): $100-550+

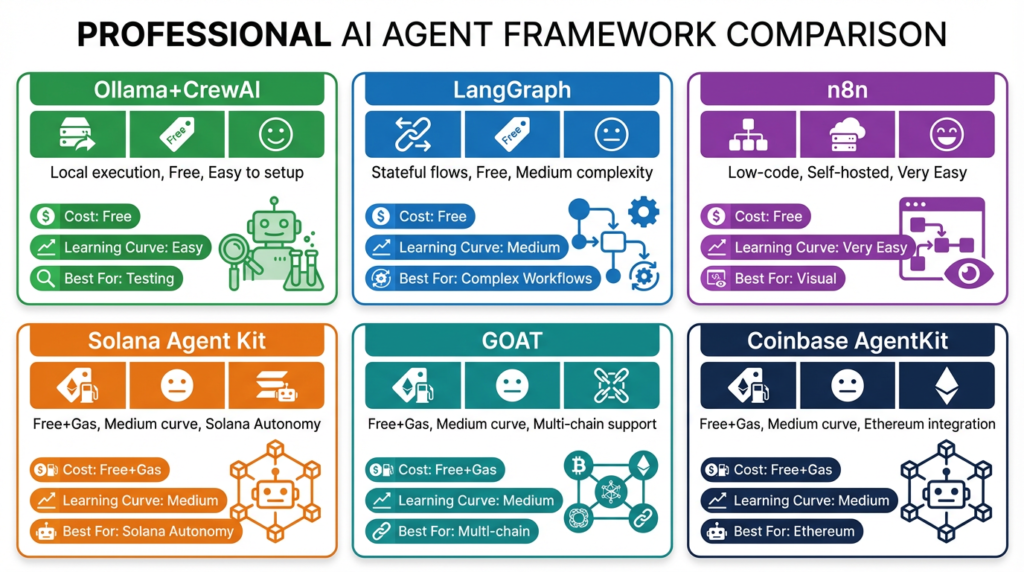
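The electricity figures are easy to sanity-check for your own machine: average power draw times hours times your tariff. The sketch below does that arithmetic. The wattage and price per kWh are inputs you would measure or look up, and the values in the example are assumptions rather than figures from this article.

```python
def monthly_electricity_cost(avg_watts: float, price_per_kwh: float,
                             hours: float = 24 * 30) -> float:
    """Cost of running a machine continuously: kW * hours * price per kWh."""
    kwh = avg_watts / 1000 * hours
    return round(kwh * price_per_kwh, 2)


# Assumed example inputs: a 150 W and a 250 W average draw at $0.15 per kWh.
light_load = monthly_electricity_cost(150, 0.15)
heavy_load = monthly_electricity_cost(250, 0.15)
```

With those assumed inputs, both results fall inside the $15-30 range quoted for a pure local setup. A higher tariff or a power-hungry GPU would push the number up.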

Framework Quick View

- Ollama + CrewAI: Local, free, easy learning curve, great for testing

- LangGraph: Free, medium learning, best for complex stateful agents

- n8n: Self-hosted low-code, very easy, ideal for visual workflows

- Solana Agent Kit: Free framework + gas, good for Solana-focused autonomy

- GOAT: Free framework + gas, strong for multi-chain work

- Coinbase AgentKit: Free framework + gas, Ethereum-focused

Security Checklist Before Going Live On-Chain

- Use session keys instead of full private keys

- Enforce daily transaction limits

- Configure recipient whitelists

- Test fully on testnet

- Set up monitoring and failure alerts

- Fund the wallet with only what you can afford to lose

- Never hardcode secrets in your code
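For the last item, a common approach is to read secrets from environment variables at startup and fail loudly when one is missing. The sketch below shows that pattern. The variable name `AGENT_SESSION_KEY` is a hypothetical example.

```python
import os


def load_secret(name: str) -> str:
    """Read a secret from the environment; refuse to start if it is missing or empty."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"Missing required environment variable: {name}")
    return value


# Usage (hypothetical variable name), typically once at startup:
# session_key = load_secret("AGENT_SESSION_KEY")
```

Keeping the key out of the source file also keeps it out of version control, which is where leaked keys most often turn up.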

Builders who follow these steps cut their monthly AI costs dramatically while gaining privacy and direct on-chain control. The tools exist today. The only real barrier left is learning the setups and respecting the security realities.
